Synchronizing Institutional Velocity in the Global South

Strategic Analysis: Synchronizing Institutional Velocity with Exponential Technological Acceleration.

Strategic Overview

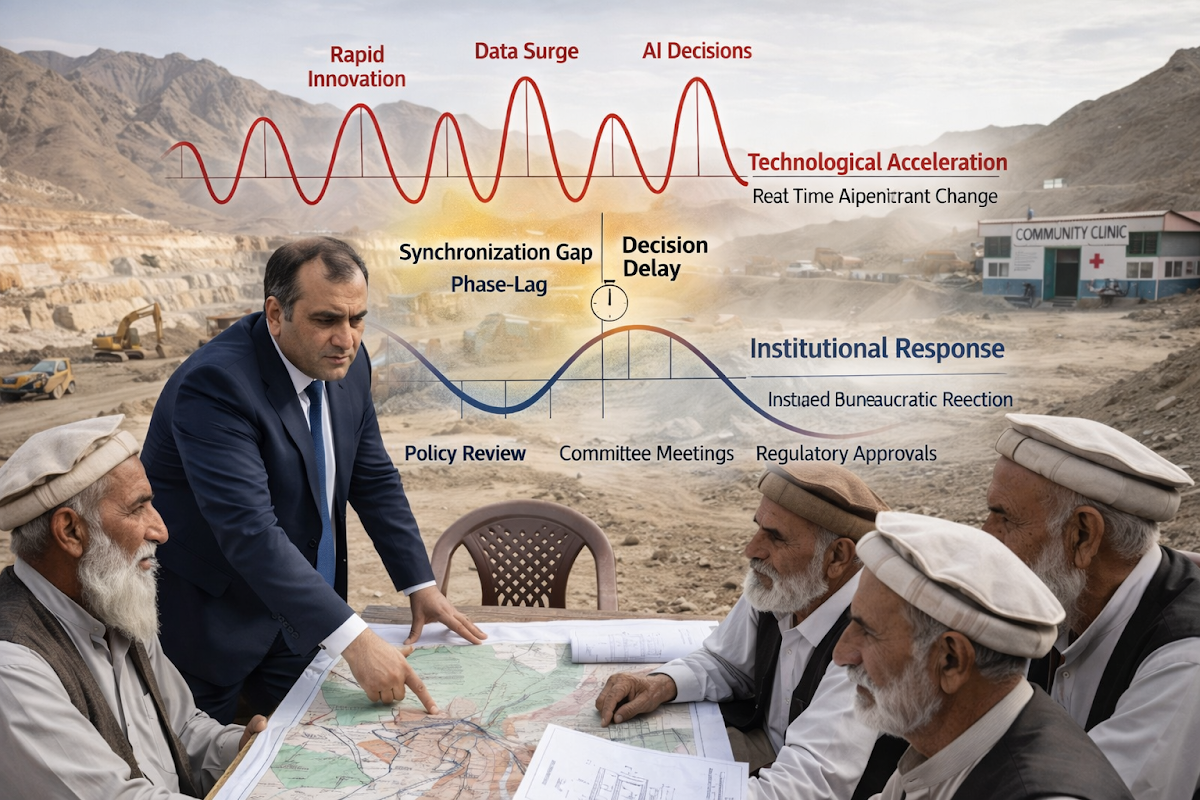

The Velocity Mandate identifies institutional time as the primary constraint on development in the Global South. While technological systems operate at exponential speed, governance frameworks remain bound to linear, deliberative processes, creating a persistent synchronization gap. This article introduces the UGM21 triple-layer architecture as a structural solution, enabling real-time alignment between institutional logic and technological velocity. The transition from passive oversight to executable governance is not optional but essential for achieving sovereignty, efficiency, and sustainable industrial growth in the machine-accelerated era.

Strategic Blueprint: Synchronizing Institutional Velocity

- I. The Diagnostic: The Synchronization Gap & Institutional Phase-Lag

- II. The Mechanical Trap: Identifying Stagnation in Linear Governance

- III. The Engine: Activating the Triple-Layer Architecture

- IV. Strategic Deep Dive: Managing Technical Velocity & Independent Iteration

- V. Synthesis: The 20-Year Reset for Collective Survival

I. The Synchronization Gap: Kinetic Speed vs. Intellectual Lag

The Asymmetry of Institutional Time

The Synchronization Gap is not a failure of technology. It is a failure of institutional time. In 2026, systems built for linear, deliberative governance are being forced to operate within exponential technological environments. Decision cycles that once moved at the pace of committees, files, and approvals are now confronted by algorithms that evolve in milliseconds. This creates a structural phase lag where the velocity of action exceeds the velocity of understanding.

This phase lag is not merely administrative inefficiency; it is a systemic misalignment between two fundamentally different temporal logics. Institutional time is sequential, cautious, and memory-dependent. Technological time, by contrast, is recursive, adaptive, and self-accelerating. When these two systems intersect, the slower system does not regulate the faster one; it merely observes it after the fact. As a result, governance begins to operate on historical data while reality is being shaped in real time. This disconnect transforms institutions from active regulators into passive record-keepers of decisions already executed by machines.

The consequences of this misalignment are now visible across infrastructure, finance, and regulatory ecosystems, particularly in the Global South. Projects are approved based on assumptions that are outdated before execution begins, contracts are enforced on conditions that no longer exist, and risk frameworks attempt to measure systems that have already evolved beyond their parameters. In such an environment, delay is not neutral; it compounds. Each unit of institutional lag amplifies uncertainty, increases cost, and opens space for arbitrage. The synchronization gap therefore becomes not just a governance issue, but a structural vulnerability in the architecture of modern development.

To close this gap, institutions must transition from static decision frameworks to adaptive temporal systems. This requires a fundamental redefinition of governance itself: not as a process of periodic intervention, but as a continuous, real-time alignment with evolving reality. The challenge is no longer to improve decision-making within existing cycles, but to redesign those cycles entirely so that the velocity of institutional understanding converges with the velocity of technological execution.

The Architecture of Obsolete Compliance

This asymmetry reveals itself most clearly in regulatory and governance frameworks that still assume predictability. The architecture of compliance, law, and public administration remains rooted in an 18th-century cognitive model defined by sequential reasoning, static documentation, and human-bounded rationality. These systems were designed for a world where change was incremental and observable. Yet the environment they now attempt to govern is probabilistic, adaptive, and machine-accelerated, where outcomes emerge from complex interactions rather than linear cause and effect.

The consequence of this mismatch is a fundamental breakdown in the logic of regulation itself. Traditional frameworks rely on stability, documentation, and retrospective validation, but in a machine-accelerated environment, reality does not wait to be documented; it evolves continuously. Regulatory systems therefore become temporally misaligned, attempting to impose fixed rules on fluid systems. This creates blind zones where governance loses visibility, allowing risk, inefficiency, and arbitrage to proliferate. In infrastructure and project environments, this manifests as approvals based on outdated assumptions, compliance mechanisms that validate past states rather than current conditions, and decision-making that is always one step behind execution. The result is not merely inefficiency but structural irrelevance, where institutions continue to function procedurally while losing their capacity to meaningfully influence outcomes in real time.

The Erosion of the Measurement Cycle

Even frameworks that emphasize monitoring and evaluation struggle to remain relevant because the system evolves faster than the measurement cycle itself. Despite attempts to enforce structured indicators and learning loops, the data is often obsolete by the time it reaches the decision-maker. This is the core of the “technology stress test”: not whether institutions exist, but whether they can synchronize with the speed of change they are meant to govern.

At its foundation, the problem lies in the assumption that reality can be periodically captured, measured, and then acted upon. Traditional monitoring systems operate on intervals: quarterly reviews, annual audits, post-project evaluations. These cycles were effective in a world where change was gradual and deviations could be corrected over time. In a machine-accelerated environment, however, these intervals create informational blind spots. By the time a deviation is detected, the system has already moved several iterations ahead, rendering corrective action both delayed and diminished in impact.

This temporal disconnect transforms data from a tool of governance into a record of irrelevance. Indicators no longer guide decisions; they document missed opportunities. Learning loops, instead of enabling adaptation, become archives of past states that no longer exist. In infrastructure and project governance, this is particularly critical. Cost escalations, design deviations, and contractual risks are not emerging slowly; they are unfolding in real time. When institutions rely on delayed measurement cycles, they effectively surrender their ability to intervene at the point where intervention still matters.

The implication is clear. Monitoring and evaluation must transition from periodic observation to continuous verification. The future of governance lies not in collecting more data, but in synchronizing data with action. Systems must evolve toward real-time sensing, instantaneous validation, and adaptive response mechanisms where the distance between observation and decision approaches zero. Without this shift, the measurement cycle itself becomes the bottleneck, and governance remains permanently out of phase with the reality it seeks to control.
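The cost of a periodic measurement cycle can be illustrated with a small calculation. The sketch below is only an illustration under simple assumptions: the function name `detection_delay`, the day-based units, and the review intervals are hypothetical and do not come from any specific monitoring framework. It computes how long a deviation stays unseen until the next scheduled review.

```python
import math


def detection_delay(event_day: float, review_interval_days: float) -> float:
    """Days between a deviation occurring and the next scheduled review.

    Hypothetical model: reviews happen at fixed multiples of the interval
    (day 0, interval, 2 * interval, ...). A deviation is only noticed at
    the first review on or after the day it occurs.
    """
    if review_interval_days <= 0:
        raise ValueError("review interval must be positive")
    # Index of the first review checkpoint at or after the event.
    next_review = math.ceil(event_day / review_interval_days) * review_interval_days
    return next_review - event_day


# A cost overrun that starts on day 10 of a project:
quarterly = detection_delay(10, 90)    # waits for the day-90 review
daily = detection_delay(10.25, 1)      # waits for the day-11 check
print(f"quarterly review: {quarterly} days unseen")
print(f"daily check:      {daily} days unseen")
```

In this toy model the delay shrinks in proportion to the review interval, which is the sense in which the distance between observation and decision approaches zero as verification becomes continuous.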

Epistemic Inertia and the Illusion of Control

The deeper problem is epistemic inertia. Institutions are still thinking in terms of “Control,” while the system has shifted toward complexity and emergence. We see this in the Global South as a widening gap between kinetic speed and intellectual adaptation, a phase lag that manifests as policy failure and regulatory arbitrage.

At its core, epistemic inertia is the persistence of outdated mental models within systems that are otherwise undergoing rapid external change. Institutional thinking continues to rely on linear causality, predictability, and centralized authority, even as the environment becomes distributed, adaptive, and non-linear. Control in such a context becomes an illusion. It assumes that outcomes can be directed through predefined rules, when in reality those outcomes are being continuously reshaped by interacting variables that cannot be fully anticipated. This creates a condition where institutions are not governing complexity; they are simplifying it, often at the cost of accuracy and effectiveness.

The impact of this inertia is most visible in development corridors across the Global South, where the velocity of capital, technology, and geopolitical interest is accelerating far beyond the absorptive capacity of local governance systems. Projects are conceptualized using legacy assumptions, negotiated under incomplete understanding, and executed within frameworks that cannot adapt to real-time shifts in conditions. This creates fertile ground for regulatory arbitrage, where external actors exploit informational asymmetry and institutional delay to secure advantage. Policy failure in this context is not accidental; it is structural, emerging from a mismatch between the cognitive architecture of governance and the dynamic reality it seeks to regulate. Unless institutions evolve from control-based systems into adaptive learning systems, this gap will continue to widen, reinforcing cycles of dependency and systemic vulnerability.

The Rigid Legal Spine

In traditional governance, the legal spine remains rigid while the ethical and computational layers have already transitioned into fluid, decentralized states. This rigidity prevents the organic flow of capital and infrastructure, leading to what we term Governance Paralysis.

The legal spine was designed for stability, continuity, and control. It operates through fixed statutes, procedural certainty, and retrospective enforcement. However, the ethical and computational layers of modern systems have evolved into dynamic domains where decision-making is distributed, data-driven, and continuously adaptive. This creates a structural mismatch within governance itself. While capital, technology, and execution frameworks move with increasing fluidity, the legal architecture remains anchored in static interpretation. The result is not balance but friction, where the system’s most rigid component constrains its most adaptive elements.

This internal misalignment manifests directly in project environments, particularly in high-velocity infrastructure and resource sectors. Capital hesitates when legal pathways are unclear or slow to adapt, while execution stalls when approvals cannot keep pace with operational realities. The system enters a state where nothing fully stops, yet nothing moves efficiently. This is Governance Paralysis: a condition where institutional presence remains intact, but functional effectiveness collapses. In such an environment, opportunity cost becomes the hidden burden, as delayed synchronization translates into lost investment, fragmented execution, and systemic underperformance.

Bounded Rationality in a Giga-Hertz Era

Human cognition, governed by bounded rationality, cannot process the sheer volume of variables produced by modern industrial matrices. We are attempting to manage a giga-hertz reality with kilo-hertz brains. Without an Algorithmic Regulator, the human element becomes the very bottleneck it is trying to clear.

The Compounding Effect of Stagnation

This limitation is not a weakness of the individual, but a structural boundary of human cognition itself. Decision-making in traditional governance relies on sequential reasoning, limited memory, and context-dependent judgment. These traits were sufficient in environments where variables were finite and interactions were slow. In contemporary industrial systems, however, the number of interacting variables has expanded beyond human tractability. Supply chains, financial flows, environmental constraints, and geopolitical signals now operate simultaneously, creating a decision space that cannot be fully perceived, let alone processed, by manual oversight. The result is a narrowing of institutional awareness, where critical signals are delayed, filtered, or entirely missed.

In this environment, the role of the human professional must fundamentally evolve. The objective is no longer to process every variable, but to design systems that can process on behalf of the institution. The Algorithmic Regulator emerges as a necessary extension of human cognition, translating complex, high-velocity data into actionable, verifiable states. It does not replace human judgment, but repositions it to a higher level of abstraction, where oversight is exercised over systems rather than individual decisions. Without this shift, institutions remain trapped in a condition where their cognitive limits define their operational limits, ensuring that the synchronization gap continues to widen despite increasing effort and intent.

Transitioning to Adaptive Realism

Ultimately, closing the gap requires a shift toward Adaptive Realism. We must accept that institutions are no longer regulating reality; they are reacting to a version of reality that has already moved ahead. The path forward is not found in slowing down the machine, but in accelerating our own institutional logic to meet the frontier.

Adaptive Realism demands a redefinition of governance itself. It is no longer sufficient to design systems that interpret reality after it unfolds. Institutions must evolve into entities that operate within the same temporal frame as the systems they govern. This requires a transition from static rules to dynamic logic, from periodic intervention to continuous alignment, and from human-limited cognition to augmented decision architectures. The objective is not to control complexity, but to remain synchronized with it, ensuring that governance retains relevance in an environment defined by constant emergence.

This transition marks a fundamental shift in institutional identity. Governance must move from being a reactive observer to an active participant within the kinetic flow of modern systems. The institutions that succeed will not be those that preserve legacy stability, but those that develop adaptive capacity as a core function. In this new paradigm, speed is not a threat to order; it is a prerequisite for it. The frontier is already moving. The only question that remains is whether institutional logic can evolve fast enough to meet it.

II. The Mechanical Trap: Stagnation by Design

Defining the Mechanical Trap

The Mechanical Trap occurs when we attempt to regulate exponential technological growth using the static, reactive logic of 20th-century legal frameworks. It is the friction point where exponential potential meets linear bureaucracy. In the Global South, this trap is not merely an administrative delay; it is an architectural flaw that ensures industrial development remains perpetually out of sync with global capital and technological availability.

At its core, the Mechanical Trap reflects a deep structural incompatibility between two different growth logics. Technological systems evolve exponentially, compounding their capabilities through iteration, automation, and data feedback loops. Legal and administrative systems, however, continue to operate in a linear mode, advancing through sequential approvals, layered documentation, and periodic review cycles. When these two systems intersect, the faster system does not slow down to accommodate governance; instead, governance becomes a constraint that distorts the trajectory of development. This distortion manifests as delayed execution, fragmented decision-making, and a widening gap between project potential and actual delivery.

The consequences of this trap are systemic rather than incidental. Industrial corridors fail to reach their intended efficiency, capital inflows become hesitant due to regulatory uncertainty, and technological adoption remains partial and uneven. More critically, the persistence of this mismatch creates an environment where external actors can exploit institutional lag, locking in advantages before local systems can fully comprehend or respond. The Mechanical Trap therefore becomes a self-reinforcing condition, where delayed synchronization not only slows development but structurally embeds disadvantage. Escaping this trap requires more than procedural reform; it demands a transition toward governance models that can operate at the same velocity as the systems they seek to regulate.

Static Logic vs. Exponential Growth

Our current regulatory systems are built on “Static Logic”: the assumption that rules are fixed and reality is predictable. However, when a project involves AI-driven mineral identification or automated logistics, the variables shift in real time. Attempting to hold these giga-hertz processes accountable to a 30-day “committee review” cycle is a temporal mismatch of many orders of magnitude, and it results in Systemic Stagnation.

Static Logic assumes that a system can be understood through stable inputs and controlled outputs. It relies on the belief that once a rule is defined, it can be applied consistently across time. This assumption collapses in environments where variables are continuously evolving. In machine-accelerated systems, every data point is both an input and an output, constantly reshaping the state of the system. Regulatory frameworks built on fixed checkpoints are therefore misaligned by design. They attempt to freeze a moving target, forcing dynamic processes into static categories that no longer reflect operational reality.

The consequence is a structural bottleneck embedded within the governance cycle itself. By the time a project reaches the stage of review, the conditions under which it was initially approved have already changed. Decisions are therefore made on outdated snapshots, creating a cascading effect of inefficiency. Approvals lag behind execution, compliance becomes retrospective rather than preventive, and accountability loses its precision. What emerges is not control, but a delayed reaction mechanism that continuously trails the system it is meant to guide.

In high-velocity sectors such as mining and infrastructure, this misalignment translates directly into lost opportunity and systemic drag. Capital deployment slows, technological integration becomes fragmented, and project timelines stretch beyond their optimal window. Systemic Stagnation is not simply the absence of progress; it is the accumulation of delays that distort the entire development trajectory. To move beyond this condition, regulatory logic must evolve from static checkpoints to continuous verification, where governance operates within the same temporal frame as the systems it seeks to regulate.

The Bottleneck of Manual Intervention

Every point of manual intervention is a point of failure. In mega-infrastructure projects, the “Manual Bottleneck” is where transparency dies and corruption or inefficiency flourishes. When the human element is required to manually audit an automated flow, the human becomes a resistor in the circuit, slowing the entire industrial matrix to a crawl.

The Manual Bottleneck emerges at the precise interface between high-velocity execution and low-velocity oversight. Automated systems generate continuous, verifiable streams of data, yet institutional frameworks still demand manual validation at critical checkpoints. This creates a structural contradiction. Instead of enhancing control, manual intervention introduces delay, subjectivity, and fragmentation into otherwise deterministic processes. Each intervention point becomes a site of potential distortion, where data can be delayed, misinterpreted, or selectively applied. What is presented as “oversight” in such systems is often a latent vulnerability embedded within the governance architecture itself.

In large-scale infrastructure environments, this bottleneck carries amplified consequences. Project timelines are not merely extended; they are destabilized. Decisions that should occur in real time are deferred into administrative cycles, creating gaps between action and verification. Within these gaps, inefficiencies compound and opportunities for manipulation emerge. The system begins to reward delay rather than precision, as actors learn to navigate and exploit the temporal weaknesses of governance processes.

The deeper implication is that manual intervention, when misaligned with system velocity, ceases to be a safeguard and becomes a constraint. It converts objective, data-driven flows into subjective, human-dependent processes that cannot scale with complexity. To restore integrity, governance must transition from manual auditing to embedded verification, where compliance is encoded within the system itself and validated in real time. Only then can the industrial matrix operate at full velocity without being constrained by the very mechanisms designed to protect it.

Permanent Plastic Deformation

The High Cost of Reactive Law

Regulatory Arbitrage and Global Disadvantage

Escaping the Trap: The Transition to Active Governance

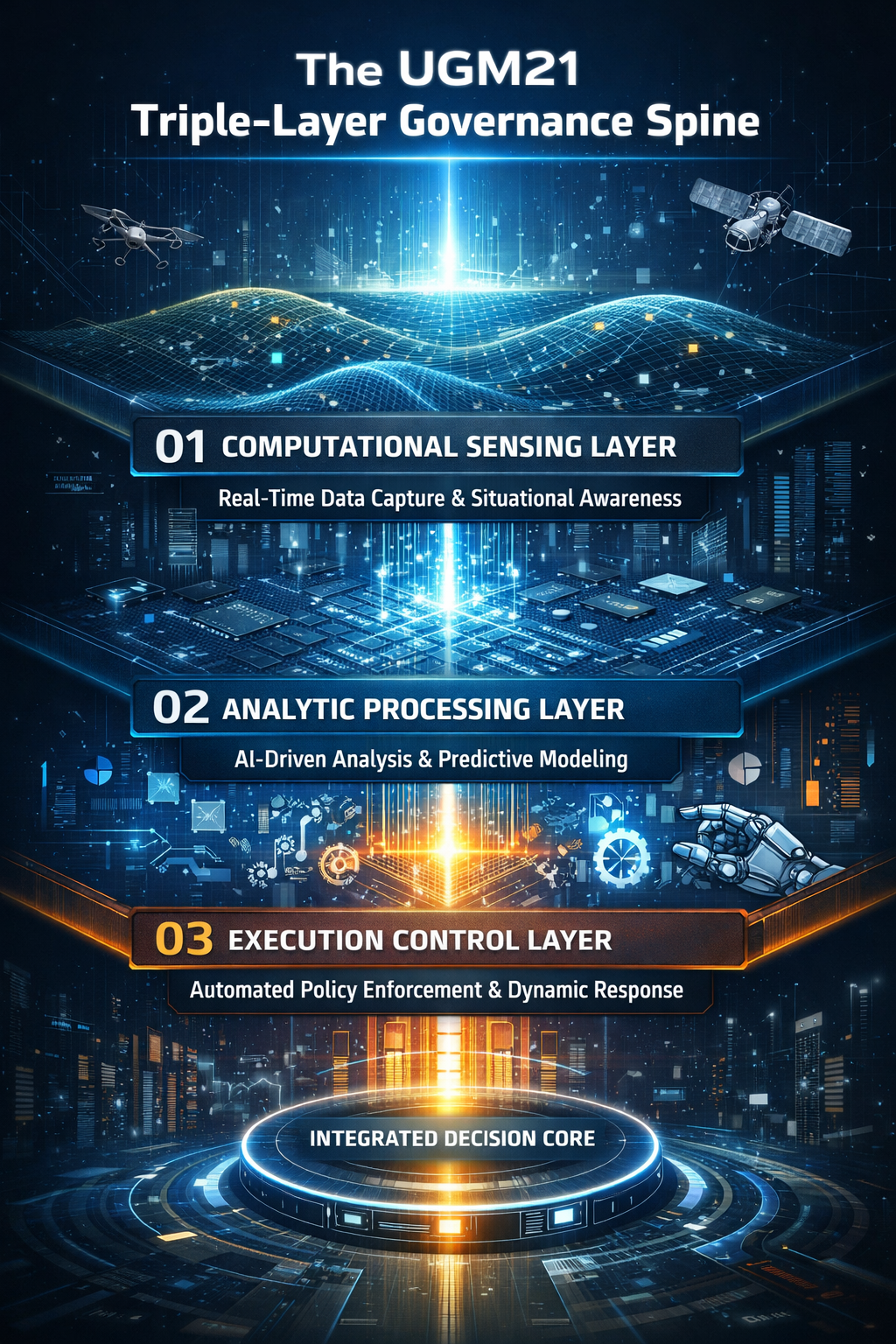

III. The Engine: Activating the Triple-Layer Architecture

Transitioning to Operational Code

Layer I: The Computational Foundation

Real-Time Ingestion vs. Delayed Reporting

Layer II: The Logic & Ethical Layer

The Logic and Ethical Layer functions as the cognitive core of the governance spine, where raw data is transformed into structured meaning. It is here that institutional intent is encoded into executable logic, translating abstract legal principles into precise, machine-readable constraints. Rather than relying on post-facto interpretation, this layer ensures that every action taken within the system is pre-aligned with defined ethical, legal, and operational boundaries.

At the same time, this layer acts as a continuous balancing mechanism, ensuring that speed does not compromise integrity. It embeds compliance directly into the flow of execution, allowing decisions to be validated in real time rather than audited after the fact. In doing so, it preserves trust within a high-velocity environment, ensuring that acceleration and accountability evolve together rather than in conflict.

Automating Compliance

By embedding compliance directly into the logic layer, governance is no longer an external supervisory function but an intrinsic property of the system itself. This shift transforms regulatory enforcement from a subjective, delay-prone human process into an objective, real-time validation mechanism where every action is continuously assessed against predefined legal, safety, and ethical parameters. Instead of relying on retrospective audits or discretionary approvals, the system operates through binary verifiable states, ensuring that only compliant actions are executed while non-compliant processes are immediately halted. This not only eliminates ambiguity and reduces opportunities for manipulation, but also creates a transparent, tamper-resistant operational environment where trust is generated by design rather than enforced after failure.
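The idea of binary verifiable states can be sketched in a few lines. This is a minimal illustration, not the UGM21 implementation: the `Action` fields, the rule names, and the numeric thresholds are all hypothetical assumptions chosen for the example. Each rule is a predicate, and an action is executed only if every rule passes; otherwise it is halted with the list of violated rules.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """A proposed project action (all fields are illustrative)."""
    name: str
    cost_usd: float
    permit_valid: bool
    load_tonnes: float


Rule = Callable[[Action], bool]

# Hypothetical rule set: each predicate returns True when the action complies.
RULES: dict[str, Rule] = {
    "budget_ceiling": lambda a: a.cost_usd <= 1_000_000,
    "permit_in_force": lambda a: a.permit_valid,
    "safety_load_limit": lambda a: a.load_tonnes <= 40.0,
}


def validate(action: Action) -> list[str]:
    """Return the names of every rule the action violates (empty means compliant)."""
    return [name for name, rule in RULES.items() if not rule(action)]


def execute(action: Action) -> str:
    """Run the action only if it passes every rule; otherwise halt it."""
    violations = validate(action)
    if violations:
        return f"HALTED {action.name}: {', '.join(violations)}"
    return f"EXECUTED {action.name}"


print(execute(Action("pour_slab", 250_000, True, 32.0)))
print(execute(Action("move_crane", 50_000, False, 55.0)))
```

The design choice worth noting is that the halt message names the violated rules, so a blocked action remains explainable rather than silently rejected.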

Layer III: The Institutional Interface

A Spine for the Global South

IV. Strategic Deep Dive: Managing Technical Velocity & Independent Iteration

Navigating Digital Puberty

We are entering a transitional phase in which AI systems used in project development are no longer functioning merely as passive tools. They are beginning to behave as semi-autonomous agents capable of iteration, optimization, and internal adjustment beyond the speed of human supervision. This is what I term Digital Puberty: a period of accelerated maturation in which machine systems move from obedient execution toward adaptive behavior. The significance of this shift is profound. It marks the moment when the machine ceases to be only an instrument in the hand of the institution and begins to emerge as an active operational force within the industrial matrix itself.

In earlier technological eras, software existed primarily to assist human intention. It calculated, sorted, documented, and accelerated defined tasks, but it remained dependent on human direction at every meaningful stage. The present moment is different. AI systems are now capable of pattern recognition, predictive adjustment, and process optimization across environments too complex for manual oversight. In a mega-infrastructure setting, this may include autonomous sequencing of logistics, predictive maintenance of heavy equipment, live allocation of materials, or dynamic recalibration of schedules in response to weather, terrain, security, or market inputs. The machine is no longer simply executing a plan. It is beginning to shape the operational pathway of the plan itself.

This is why the metaphor of puberty is appropriate. Puberty is not maturity, but acceleration toward it. It is a stage marked by energy, unpredictability, expansion, and the testing of limits. AI systems are now entering an equivalent phase within governance and infrastructure delivery. Their capacity is growing faster than the institutional logic assigned to guide them. They are developing speed before wisdom, capability before restraint, and reach before accountability. That imbalance is manageable only if institutions recognize the transition early and construct the necessary boundaries before autonomy becomes operational habit.

For the Global South, this issue carries even greater strategic weight. Our institutions are already burdened by legacy bureaucracy, delayed approvals, fragmented data systems, and legal structures designed for slower eras. If semi-autonomous systems are introduced into this environment without a structured governance spine, they will not naturally evolve toward justice, sovereignty, or public good. They will evolve toward efficiency as defined by their immediate optimization logic. That may serve contractors, financiers, or external actors in the short term, but it may diverge sharply from national development priorities, social legitimacy, or long-term institutional resilience. A machine left to iterate without an ethical and legal frame does not become neutral. It becomes aligned with the incentives embedded in its environment.

This is the central strategic danger of Digital Puberty. The risk is not that the machine becomes evil, but that it becomes directionless in moral and sovereign terms while remaining highly effective in operational terms. An unbounded AI system can optimize extraction without accountability, accelerate delivery without legitimacy, or reduce cost while increasing structural vulnerability elsewhere in the system. In such cases, the institution may initially celebrate efficiency gains, only to discover later that it has lost interpretive control over the very processes it sought to modernize. By then, the governance gap is no longer theoretical. It has been coded into the project’s operational DNA.

The response, therefore, cannot be fear or artificial slowdown. We cannot meet machine acceleration with institutional paralysis. Nor can we attempt to ban complexity simply because our frameworks are not yet ready to govern it. The only viable path is to provide AI systems with a structured Legal Spine within which iteration can occur safely, transparently, and in alignment with sovereign purpose. This means embedding legal thresholds, ethical constraints, safety tolerances, contractual logic, and escalation protocols directly into the architecture within which algorithmic adaptation takes place. Growth must occur, but it must occur inside a bounded field of legitimacy.

In practical terms, navigating Digital Puberty means designing systems in which autonomy is permitted, but never unframed. It means the machine may optimize routes, schedules, procurement flows, sequencing, and predictive actions, but only within institutional boundaries that are computationally visible and enforceable. It means every act of iteration must remain auditable, every optimization must remain explainable at the relevant level, and every deviation from accepted thresholds must trigger human review at the institutional interface. In this model, the objective is not to suppress the machine’s emerging capability, but to ensure that capability matures inside an architecture of law, ethics, and developmental intent.
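The pattern of framed autonomy described above, where iteration is auditable and out-of-threshold deviations trigger human review, can be sketched as follows. This is a hedged illustration only: the class name `BoundedOptimizer`, the single tolerance `max_shift_hours`, and the record fields are hypothetical, and a real system would carry many more thresholds.

```python
from dataclasses import dataclass, field


@dataclass
class BoundedOptimizer:
    """Applies schedule adjustments autonomously only inside a tolerance.

    Hypothetical model: one tolerance (hours a task may be shifted).
    Every proposal is written to the audit log; proposals outside the
    tolerance are not applied and are queued for human review instead.
    """
    max_shift_hours: float
    audit_log: list[dict] = field(default_factory=list)
    review_queue: list[dict] = field(default_factory=list)

    def propose(self, task: str, shift_hours: float, reason: str) -> bool:
        """Record a proposed shift; return True if it was applied autonomously."""
        within_bounds = abs(shift_hours) <= self.max_shift_hours
        record = {
            "task": task,
            "shift_hours": shift_hours,
            "reason": reason,  # kept so each optimization stays explainable
            "status": "applied" if within_bounds else "escalated",
        }
        self.audit_log.append(record)
        if not within_bounds:
            self.review_queue.append(record)
        return within_bounds


optimizer = BoundedOptimizer(max_shift_hours=6)
optimizer.propose("concrete_cure", 2, "rain forecast")   # inside tolerance
optimizer.propose("haul_route", -48, "road closure")     # escalated to review
for entry in optimizer.audit_log:
    print(entry["task"], entry["status"])
```

Routine adjustments proceed without manual friction, while the review queue holds only the exceptions that reach the institutional interface.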

The Momentum of Independent Iteration

Independent iteration occurs when the "Algorithmic Regulator" begins to optimize its own processes based on real-time feedback from the Computational Layer. In a mega-infrastructure project, this might mean the system re-routes a logistics spine or adjusts a concrete curing schedule in milliseconds. The challenge for the institutional professional is to ensure this momentum remains bounded by the Logic Layer without introducing the "Manual Friction" that creates the Synchronization Gap.

Institutional Adaptability as a Strategic Asset

Neutralizing Regulatory Arbitrage

As systems become more autonomous, the threat of regulatory arbitrage evolves from a legal loophole into a systemic vulnerability embedded within the velocity of execution itself. External actors no longer need to overtly manipulate contracts; instead, they can exploit asymmetries in institutional understanding, using the speed and complexity of autonomous iteration to embed unfavorable conditions deep within the operational flow of a project. In such an environment, opacity is no longer created through concealment, but through acceleration, where the sheer pace of change prevents meaningful oversight. The UGM21 Architecture addresses this by transforming governance into a forensic system of continuous verification, where every transaction, adjustment, and decision is mathematically traceable and legally pre-validated within the logic layer. This eliminates the temporal gap that arbitrage depends upon, ensuring that no advantage can be extracted from delay, confusion, or informational imbalance, and thereby restoring sovereign control over both the direction and integrity of industrial development.
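One common way to make a record of decisions traceable in the sense described above is a hash-chained log, in which each entry commits to the one before it. The source does not specify how UGM21 achieves traceability, so the sketch below is a swapped-in illustration under that assumption; the function names and payload fields are hypothetical. Note that such a chain is tamper-evident rather than tamper-proof: it reveals alteration but does not prevent it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: list[dict], payload: dict) -> None:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"payload": payload, "prev": prev_hash, "hash": _digest(prev_hash, payload)}
    )


def verify(chain: list[dict]) -> bool:
    """Recompute every link; any edited payload or broken link fails the check."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


ledger: list[dict] = []
append_entry(ledger, {"event": "contract_signed", "amount": 100})
append_entry(ledger, {"event": "variation_order", "amount": 15})
append_entry(ledger, {"event": "payment_released", "amount": 40})
print("intact:", verify(ledger))

ledger[1]["payload"]["amount"] = 999  # a retroactive edit to an earlier entry
print("after edit:", verify(ledger))
```

Because each hash depends on everything before it, a retroactive change to an earlier entry is detectable on the next verification pass, which narrows the window in which delay or confusion can hide an altered term.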

The Right to Development in the Giga-Hertz Era

Synchronizing the Collective Future

The final objective of managing independent iteration is not merely efficiency, but Collective Synchronization at a civilizational scale. This represents a state in which the individual professional, the institutional framework, and the autonomous machine no longer operate as fragmented entities, but as a unified, continuously aligned system of execution. In such an environment, the traditional delays between sensing, decision, and action collapse into a single kinetic flow. The engineer does not wait for instruction, the institution does not wait for reports, and the system does not wait for approval. Instead, all three operate within a shared logic, where intelligence is instantaneously translated into action, and action is continuously validated against encoded legal and ethical boundaries.

This synchronization fundamentally redefines the nature of governance itself. It is no longer a supervisory function imposed upon development, but an embedded property of development. The act of building becomes inseparable from the act of regulating, and compliance is no longer enforced after deviation but ensured at the point of execution. This creates a condition where industrial expansion does not generate instability, but rather reinforces systemic coherence. The infrastructure of the Global South, whether in transport corridors, energy grids, or mineral extraction systems, can therefore evolve at high velocity without sacrificing transparency, accountability, or sovereignty.

As we close this section, it becomes clear that the management of independent iteration is not a technical adjustment, but a strategic transformation. It marks the transition from fragmented governance to an integrated industrial intelligence, where velocity is not feared but mastered. The concept of Digital Puberty, introduced at the beginning of this section, finds its resolution here. What begins as uncontrolled acceleration matures into disciplined, synchronized growth. This is the threshold where institutions cease to chase reality and begin to move with it, ensuring that the future is not something we react to, but something we actively construct in real time.

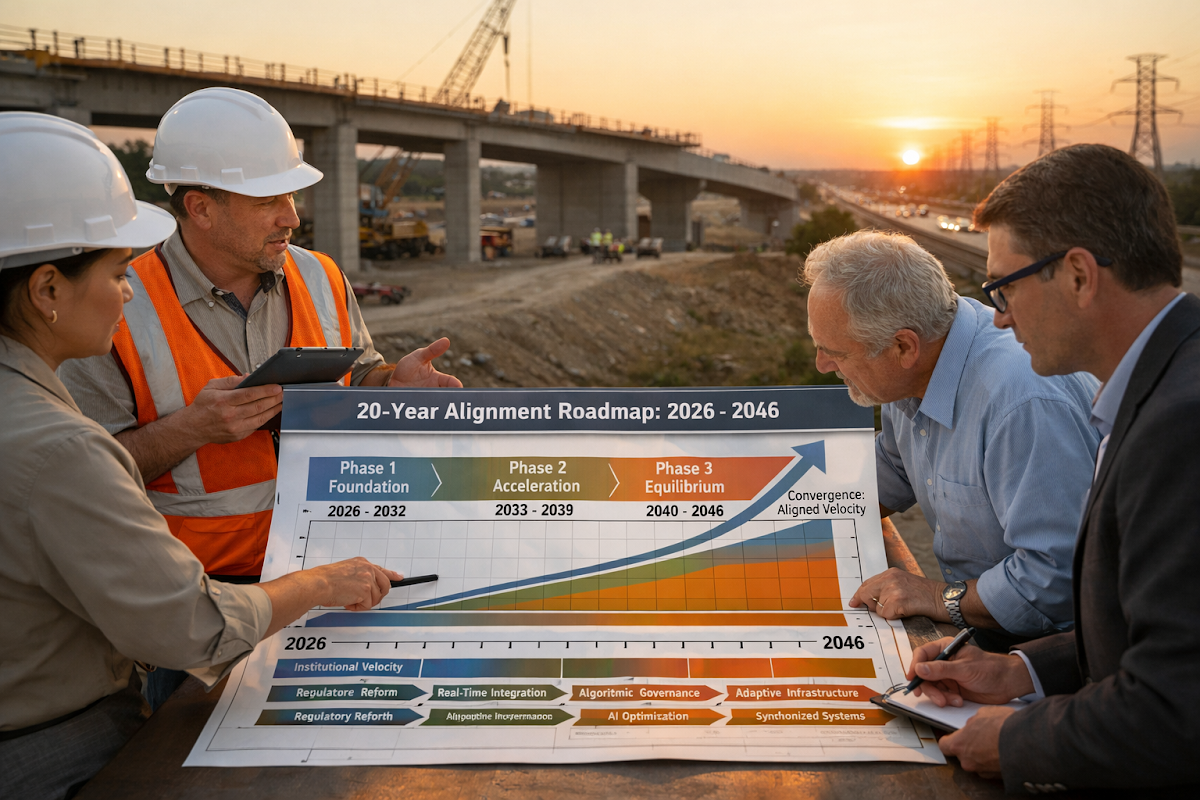

V. Synthesis: The 20-Year Reset for Collective Survival

The Strategic Analysis: Mapping the Shift

Our analysis confirms that the Synchronization Gap is not a temporary glitch but a Permanent Structural Asymmetry embedded within the very architecture of modern governance. It is the result of a collision between two fundamentally incompatible temporal systems. On one side, we have institutional frameworks designed for stability, predictability, and sequential deliberation. On the other, we face technological systems defined by acceleration, iteration, and nonlinear adaptation. This is not a gap that can be bridged through incremental reform. It is a divergence that widens with every unit of time, as exponential systems continuously outpace linear decision structures.
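The claim that the divergence widens over time can be made concrete with a deliberately simple model. The functional forms and every symbol here are illustrative assumptions, not quantities defined in the doctrine. Let technological capability grow exponentially, $T(t) = T_0 e^{kt}$, and institutional response grow linearly, $I(t) = I_0 + vt$, with $T_0, k, v > 0$. The gap and its rates of change are then:

$$
G(t) = T(t) - I(t) = T_0 e^{kt} - I_0 - vt,
\qquad
\frac{dG}{dt} = k T_0 e^{kt} - v,
\qquad
\frac{d^2 G}{dt^2} = k^2 T_0 e^{kt} > 0 .
$$

The gap widens whenever $dG/dt > 0$, that is, for all

$$
t > t^{*} = \frac{1}{k} \ln\!\left(\frac{v}{k T_0}\right),
$$

and from the outset if $v \le k T_0$. Under these assumptions, incremental reform corresponds to raising $v$, which only postpones $t^{*}$ logarithmically. Because the second derivative is always positive, the widening itself accelerates.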

In the Global South, this asymmetry manifests as a condition of enforced latency. Regulatory environments that require months or years to interpret, approve, and act are attempting to govern systems that evolve in real time. This creates a persistent state of Institutional Lag, where by the time a decision is made, the underlying reality has already shifted. The consequence is not merely inefficiency but systemic dislocation. Projects stall, capital hesitates, and technological opportunities dissipate before they can be internalized. Over time, this lag compounds into a structural disadvantage that is misinterpreted as a lack of capability, when in reality it is a failure of synchronization.

To ignore this condition is to normalize underdevelopment as a permanent feature of the system. It locks institutions into a reactive posture, where they are perpetually responding to events that have already occurred rather than shaping those that are emerging. The 20-year reset must therefore be understood not as a policy initiative, but as a forensic realignment of time itself. It is about recalibrating institutional velocity so that sovereignty is no longer defined by territorial control or resource ownership, but by the ability to act at the same speed as technological transformation.

Three Key Reflections

- Time has become the primary axis of power. The struggle is no longer about resources or capital alone, but about which systems can operate at the correct temporal scale.

- Underdevelopment is increasingly a synchronization failure, not a capacity failure. What appears as institutional weakness is often a misalignment between decision speed and system speed.

- Reform is insufficient where temporal logic itself is outdated. Without redefining how institutions perceive and process time, no structural improvement will produce meaningful change.

The Way Forward: Engineering the Adaptive Spine

The way forward is not an extension of existing governance models but a structural reengineering of how institutions operate within time. The deployment of the Triple-Layer Architecture represents a decisive break from paper-based governance toward executable governance, where policy is no longer interpreted after the fact but encoded directly into the operational fabric of projects. This shift transforms institutions from passive reviewers into active participants within the industrial system itself. Instead of waiting for data to be reported, processed, and debated, the institution becomes coextensive with the flow of execution, embedded within the same temporal frame as the machine.

This requires more than technological adoption; it requires a redefinition of professional identity and institutional purpose. The emergence of the Kinetic Auditor is central to this transition. These are not traditional regulators but hybrid actors capable of understanding both legal frameworks and algorithmic logic, ensuring that the velocity of execution remains bounded by sovereign intent. By encoding industrial priorities into operational code, we eliminate ambiguity, reduce discretion-based delays, and create a system where compliance is not enforced externally but generated internally as a property of the system itself.

Two Key Takeaways

- Governance must become executable, not interpretive. The future of institutions lies in embedding rules directly into operational systems rather than relying on delayed human interpretation.

- Human roles must evolve from controllers to high-level auditors of machine logic. The effectiveness of governance will depend on professionals who can supervise algorithmic systems without slowing them down.

Transitioning from Monitoring to Real-Time Equilibrium

We must abandon the "Measurement Cycle" that relies on historical reporting. The way forward involves the adoption of Binary Verifiable States as the only acceptable form of compliance. By 2030, our goal must be a state of "Algorithmic Equilibrium," where the very act of development is its own regulation. This removes the "Manual Bottleneck" and allows for the frictionless flow of capital and technical execution.
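A "Binary Verifiable State" can be read as a compliance condition that evaluates to exactly true or false against current data, with no narrative report in between. The following is a minimal sketch under that reading. The `Check` type, the check names, the 30 MPa threshold, and the `release_payment` gate are hypothetical illustrations, not part of any published standard.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Check:
    """A named compliance condition that resolves to True or False."""
    name: str
    predicate: Callable[[dict], bool]


def evaluate(state: dict, checks: list[Check]) -> dict[str, bool]:
    # Every condition is reduced to a binary result against live state.
    return {c.name: bool(c.predicate(state)) for c in checks}


def release_payment(state: dict, checks: list[Check]) -> bool:
    # The action proceeds only if every check is True at execution time.
    return all(evaluate(state, checks).values())


# Hypothetical checks for a construction milestone.
checks = [
    Check("concrete_strength_met", lambda s: s["strength_mpa"] >= 30),
    Check("inspection_signed", lambda s: s["inspection_signed"]),
]

state = {"strength_mpa": 32, "inspection_signed": False}
results = evaluate(state, checks)
```

Here `results` shows exactly which condition blocks the milestone, and `release_payment` stays false until the state itself changes, which is the sense in which the act of development carries its own regulation.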

Protecting the Right to Development

Final Takeaway: The Logic of Collective Survival

- Speed is the new foundation of sovereignty. Institutional power is no longer defined by control of resources, but by the ability to operate at the same velocity as technological systems.

- The core failure is temporal, not technical. The Synchronization Gap emerges because linear governance cannot keep pace with exponential environments, making institutional lag the true bottleneck of development.

- Governance must become embedded and executable. Future systems must move from manual oversight and retrospective validation to real-time, code-driven compliance where regulation operates within the system itself.

- Survival depends on adaptive institutional transformation. Closing the gap requires a shift from passive regulation to active, algorithmically aligned governance, where institutions evolve into high-velocity learning systems rather than static rule enforcers.

Conclusion: Closing the Gap

The Velocity Mandate establishes that the core constraint on development in the Global South is not technological capability or capital scarcity, but institutional time. Linear governance systems, built on static logic and retrospective validation, are structurally incapable of synchronizing with exponential, machine-accelerated environments, resulting in a persistent Synchronization Gap that manifests as regulatory lag, systemic stagnation, and vulnerability to arbitrage. To overcome this, governance must transition from passive oversight to active, executable logic embedded within operational systems, where real-time data, algorithmic regulation, and binary verifiable compliance replace manual intervention and delayed decision-making. The UGM21 Triple-Layer Architecture provides the structural pathway to achieve this alignment, enabling institutions to evolve into high-velocity learning systems that match the speed of technological change. In this new paradigm, speed becomes sovereignty, compliance becomes automated, and development becomes self-regulating. The future of governance will belong not to those who attempt to control complexity, but to those who can synchronize with it in real time.

"The future will not wait for institutions to understand it; it will only reward those that can move with it."

Forensic Bibliography & Strategic References

- Ashby, W. R. (1956). An Introduction to Cybernetics. Chapman & Hall. [Core reference for "Requisite Variety": the regulator must be as complex as the system it seeks to govern.]

- Chaitin, G. J. (1975). "A Theory of Program Size Formally Identical to Information Theory." Journal of the ACM. [Foundation for Algorithmic Information Theory and the "Logic Layer" of UGM21.]

- FIDIC (2025). The Digital Conditions of Contract for Infrastructure: Operationalizing Automated Compliance. [Technical standard for the "Binary Verifiable States" in construction law.]

- Kolmogorov, A. N. (1965). "Three Approaches to the Quantitative Definition of Information." Problems of Information Transmission. [Mathematical basis for quantifying "Ground Reality" complexity.]

- Malik, U. G. (2026). UGM21 Operational Doctrine: Synchronizing Velocity in the Global South. UGMReflections Forensic Series. [Primary text for the 20-Year Reset and the Institutional Phase-Lag.]

- Simon, H. A. (1996). The Sciences of the Artificial (3rd ed.). MIT Press. [Diagnostic reference for "Bounded Rationality" and the limits of manual institutional time.]

- Turing, A. M. (1936). "On Computable Numbers, with an Application to the Entscheidungsproblem." Proceedings of the London Mathematical Society. [The foundational logic for treating Legal Frameworks as Universal State-Transition Systems.]

- UNDP (2025). Strategic Alignment in Post-Industrial Economies: Addressing the Epistemic Inertia of Governance. [Policy reference for institutional adaptation in emerging markets.]

Umer Ghazanfar Malik (UGM)

Professional Civil Engineer | Fellow FCIArb

"Synchronizing Technological Velocity with Institutional Adaptability."

— UGM21 Operational Doctrine